2nd Facial Landmark Localisation Competition - The Menpo BenchMark

Latest News

- For any requests (for example, regarding the zipped files), please email the competition address listed as 'Workshop Administrator' towards the end of this page.

- Training/testing data and the evaluation toolkit can be downloaded from here.

- If you use the above data please cite the following papers:

J Deng, A Roussos, G Chrysos, E Ververas, I Kotsia, J Shen, S Zafeiriou, "The Menpo benchmark for multi-pose 2D and 3D facial landmark localisation and tracking". IJCV, 2018

S. Zafeiriou, G. Trigeorgis, G. Chrysos, J. Deng and J. Shen. "The Menpo Facial Landmark Localisation Challenge: A step closer to the solution.", CVPRW, 2017.

G. Trigeorgis, P. Snape, M. Nicolaou, E. Antonakos and S. Zafeiriou. "Mnemonic descent method: A recurrent process applied for end-to-end face alignment.", CVPR, 2016.

MENPO DATA

The Menpo Facial Landmark Localisation in-the-Wild Challenge & Workshop was held in conjunction with the International Conference on Computer Vision & Pattern Recognition (CVPR) 2017, Hawaii, USA.

Organisers

Chairs:

Stefanos Zafeiriou, Imperial College London, UK s.zafeiriou@imperial.ac.uk

Jiankang Deng, Imperial College London, UK j.deng16@imperial.ac.uk

Maja Pantic, Imperial College London, UK m.pantic@imperial.ac.uk

Data Chairs:

Grigorios Chrysos, Imperial College London, UK g.chrysos@imperial.ac.uk

George Trigeorgis, Imperial College London, UK george.trigeorgis08@imperial.ac.uk

Jie Shen, Imperial College London, UK jie.shen07@imperial.ac.uk

Scope

Comprehensive benchmarks currently exist for facial landmark localization and tracking (see the 300W [1] and 300VW [2] challenges). Nevertheless, these benchmarks mainly cover (near) frontal faces. In CVPR 2017, we take a significant step further and present a new comprehensive multi-pose benchmark, and we organize a workshop-challenge for landmark detection in images displaying arbitrary poses. To this end, we have annotated a large set of profile faces with 39 fiducial points. Furthermore, we have annotated many new images of (near) frontal faces using the standard 68-point markup. The challenge will represent the very first thorough quantitative evaluation of multi-pose face landmark detection. The competition will also explore how far we are from attaining satisfactory facial landmark localisation in arbitrary poses. The results of the Challenge will be presented at the Faces "in-the-wild" (Wild-Face) Workshop to be held in conjunction with CVPR 2017.

The MENPO Challenge

In order to develop a comprehensive benchmark for evaluating facial landmark localisation

algorithms in the wild in arbitrary poses, we have annotated both (near) frontal, as well as profile faces

in the wild. To this end, we annotated (a) many new in-the-wild (near) frontal images

with regards to the same mark-up (i.e. set of facial landmarks) used in the 300 W competition

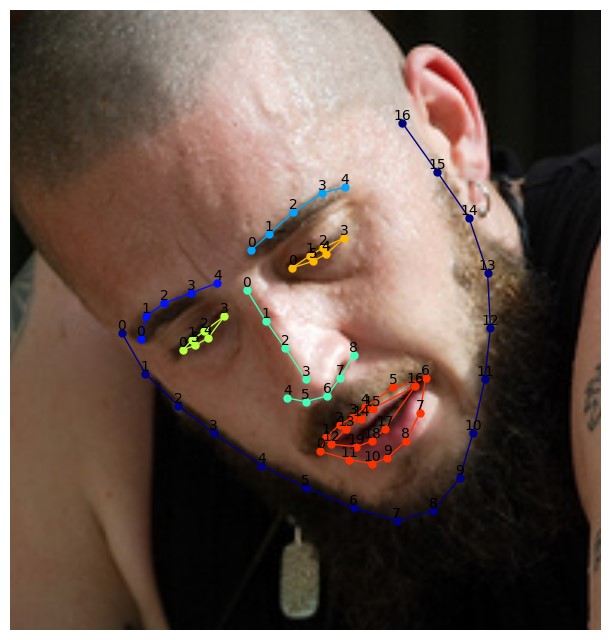

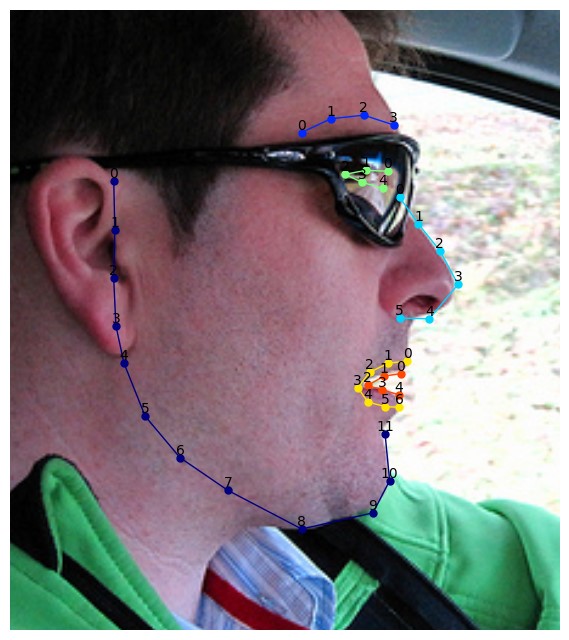

[1,2] (a total of 68 landmarks, please see Fig. 1) and (b) many in-the-wild profile facial images using a 39 landmakrs mark-up, please see Fig. 2

Fig. 1: The 68 points mark-up used for our annotations in near-frontal faces.

Fig. 2: The 39 points mark-up used for our annotations in profile faces.

Training

The training facial samples and annotations are available to download from here. Participants will be able to train their facial landmark localisation algorithms using the above training set and the data from the 300W competition.

The training data contain the facial images and their corresponding annotations (.pts files).

- The dataset is available for non-commercial research purposes only.

- All the training images of the dataset are obtained from the FDDB and AFLW databases (please cite the corresponding papers when you are using them). We are not responsible for the content nor the meaning of these images.

- You agree not to reproduce, duplicate, copy, sell, trade, resell or exploit for any commercial purposes, any portion of the images and any portion of derived data.

- You agree not to further copy, publish or distribute any portion of the annotations of the dataset, except that copies of the dataset may be made for internal use at a single site within the same organization.

- We reserve the right to terminate your access to the dataset at any time.
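As a rough illustration of working with the .pts annotation files mentioned above, the following sketch reads one file's text into a list of (x, y) landmarks. The file layout it expects (a `version` line, an `n_points` line, and coordinates between braces) is an assumption that is not specified on this page, and the helper names `parse_pts` and `markup_of` are hypothetical. Only the landmark counts (68 for near-frontal, 39 for profile) come from the challenge description.

```python
from typing import List, Tuple

Point = Tuple[float, float]

# Landmark counts stated in the challenge description.
MARKUP_BY_COUNT = {68: "frontal", 39: "profile"}


def parse_pts(text: str) -> List[Point]:
    """Parse the contents of a .pts file into a list of (x, y) pairs.

    Assumed layout (not specified on this page):
        version: 1
        n_points: 68
        {
        x1 y1
        ...
        }
    """
    declared = None
    points: List[Point] = []
    inside = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("n_points"):
            declared = int(line.split(":", 1)[1])
        elif line == "{":
            inside = True
        elif line == "}":
            inside = False
        elif inside:
            x, y = line.split()[:2]
            points.append((float(x), float(y)))
    # Cross-check the header against the number of coordinates actually read.
    if declared is not None and declared != len(points):
        raise ValueError(f"header declares {declared} points, found {len(points)}")
    return points


def markup_of(points: List[Point]) -> str:
    """Return 'frontal' for 68 landmarks, 'profile' for 39, else raise."""
    try:
        return MARKUP_BY_COUNT[len(points)]
    except KeyError:
        raise ValueError(f"unexpected landmark count: {len(points)}") from None
```

Checking the landmark count right after parsing is a cheap way to route each sample to the frontal or profile model and to catch truncated files early.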

Testing

Participants will have their algorithms tested on other in-the-wild facial images, which will be provided on a predefined date (see below). This dataset aims to test the ability of current systems to fit unseen subjects, independently of variations in pose, expression, illumination, background, occlusion, and image quality.

The test data are available here.

A winner will be announced for each category (profile and (near) frontal). Participants do not need to submit an executable to the organisers, only their results on the test images. Participants can take part in the (near) frontal challenge, the profile challenge, or both. The Menpo Challenge organisers will not take part in the competition. The test set images are similar in nature to those of the Menpo training set.

Performance Assessment

Fitting performance will be assessed on the same mark-up provided for training using well-known error measures. In particular, the average point-to-point Euclidean error, normalised appropriately for profile and frontal images, will be used [1,2]. Matlab code for calculating the error can be downloaded here. The error will be calculated over (a) all landmarks, and (b) the facial feature landmarks (eyebrows, eyes, nose, and mouth). A cumulative curve showing the percentage of test images for which the error was less than a given value will be produced. These results will then be returned to the participants for inclusion in their papers. As a baseline, benchmark results will be provided for a standard approach of generic face detection followed by generic facial landmark detection (e.g., Viola-Jones plus Active Appearance Models [3]).
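The error measure and cumulative curve described above can be sketched in a few lines of Python. This is not the organisers' Matlab code: the function names are hypothetical, and because the page does not say which normaliser is used for each markup, the normaliser is passed in by the caller. The bounding-box diagonal of the ground-truth landmarks is shown only as one possible choice. The optional `indices` argument allows the error to be restricted to a subset such as the facial feature landmarks; which indices form that subset is likewise left to the caller.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]


def bbox_diagonal(points: Sequence[Point]) -> float:
    """Diagonal of the tight bounding box around the landmarks.

    One possible normaliser; an assumption, not confirmed by the page.
    """
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return math.hypot(max(xs) - min(xs), max(ys) - min(ys))


def normalised_error(
    pred: Sequence[Point],
    gt: Sequence[Point],
    normaliser: float,
    indices: Optional[Sequence[int]] = None,
) -> float:
    """Mean point-to-point Euclidean distance divided by `normaliser`."""
    if len(pred) != len(gt):
        raise ValueError("prediction and ground truth differ in length")
    if normaliser <= 0:
        raise ValueError("normaliser must be positive")
    idx = range(len(gt)) if indices is None else indices
    distances = [math.dist(pred[i], gt[i]) for i in idx]
    return sum(distances) / (len(distances) * normaliser)


def cumulative_curve(errors: Sequence[float], thresholds: Sequence[float]) -> List[float]:
    """Fraction of images whose error is less than each threshold."""
    n = len(errors)
    return [sum(e < t for e in errors) / n for t in thresholds]


# Usage: two landmarks, one exact and one off by a 3-4-5 triangle.
gt = [(0.0, 0.0), (3.0, 4.0)]
pred = [(0.0, 0.0), (0.0, 0.0)]
err = normalised_error(pred, gt, bbox_diagonal(gt))  # (0 + 5) / (2 * 5) = 0.5
curve = cumulative_curve([0.1, 0.2, 0.3, 0.4], [0.25, 0.5])  # [0.5, 1.0]
```

Keeping the normaliser as an explicit argument makes it easy to swap in whatever convention the official evaluation toolkit uses for frontal and profile images.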

The authors acknowledge that if they decide to submit, the resulting curve might be used by the organisers in any related visualisations/results. The authors are prohibited from sharing the results with other contesting teams.

The organisers cannot publish the resulting .pts files of the participants without their consent. To avoid overfitting the test set, only one final submission per team will be accepted. Participants who wish to test their algorithms should therefore use their own validation set rather than submit multiple times. Each image contains a single annotated face: the one closest to the centre of the image.
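Since only the face closest to the image centre is annotated, a pipeline that runs a generic face detector first may need to discard other detections. A minimal sketch of that selection step follows, assuming detections arrive as `(x_min, y_min, x_max, y_max)` boxes. The box format and the function name `closest_to_centre` are illustrative assumptions, not part of the challenge specification.

```python
import math
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max), assumed format


def closest_to_centre(boxes: Sequence[Box], image_width: float, image_height: float) -> Box:
    """Return the box whose centre lies nearest to the image centre."""
    if not boxes:
        raise ValueError("no detections supplied")
    cx, cy = image_width / 2.0, image_height / 2.0

    def distance(box: Box) -> float:
        x0, y0, x1, y1 = box
        return math.hypot((x0 + x1) / 2.0 - cx, (y0 + y1) / 2.0 - cy)

    return min(boxes, key=distance)


# Usage: in a 100x100 image, the box centred at (50, 50) is selected.
detections = [(0.0, 0.0, 10.0, 10.0), (40.0, 40.0, 60.0, 60.0)]
chosen = closest_to_centre(detections, 100, 100)
```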

Faces in-the-wild 2017 Workshop

Our aim is to accept up to 10 papers to be orally presented at the workshop.

Submission Information:

Challenge participants should submit a paper to the workshop that summarizes the methodology and the achieved performance of their algorithm. Submissions should adhere to the main CVPR 2017 proceedings style. The workshop papers will be published in the CVPR 2017 proceedings. Please sign up in the submissions system to submit your paper.

Important Dates:

- 27 January: Announcement of the Challenges

- 30 January: Release of the training data

- 22 March: Release of the test data

- 31 March: Deadline for returning results

- 2 April: Return of the results to authors

- 20 April: Deadline for paper submission

- 27 April: Decisions

- 19 May: Camera-ready deadline

- 26 July: Workshop date

Contact:

Workshop Administrator: menpo.challenge@gmail.com

References

[1] C. Sagonas, G. Tzimiropoulos, S. Zafeiriou, and M. Pantic. "300 faces in-the-wild challenge: The first facial landmark localization challenge." In IEEE International Conference on Computer Vision Workshops (ICCVW), December 2013, pp. 397-403.

[2] J. Shen, S. Zafeiriou, G. G. Chrysos, J. Kossaifi, G. Tzimiropoulos, and M. Pantic. "The first facial landmark tracking in-the-wild challenge: Benchmark and results." In IEEE International Conference on Computer Vision Workshop (ICCVW), December 2015, pp. 1003-1011.

[3] G. Tzimiropoulos, J. Alabort, S. Zafeiriou, and M. Pantic. "Generic active appearance models revisited." ACCV, 2012.

[4] R. Gross, I. Matthews, J. Cohn, T. Kanade, and S. Baker. "Multi-pie." IVC, 28(5):807-813, 2010.

Program Committee:

- Jorge Batista, University of Coimbra (Portugal)

- Richard Bowden, University of Surrey (UK)

- Jeff Cohn, CMU/University of Pittsburgh (USA)

- Roland Goecke, University of Canberra (AU)

- Peter Corcoran, NUI Galway (Ireland)

- Fred Nicolls, University of Cape Town (South Africa)

- Mircea C. Ionita, Daon (Ireland)

- Ioannis Kakadiaris, University of Houston (USA)

- Stan Z. Li, Institute of Automation Chinese Academy of Sciences (China)

- Simon Lucey, CMU (USA)

- Iain Matthews, Disney Research (USA)

- Aleix Martinez, Ohio State University (USA)

- Dimitris Metaxas, Rutgers University (USA)

- Stephen Milborrow, sonic.net

- Louis P. Morency, University of Southern California (USA)

- Ioannis Patras, Queen Mary University (UK)

- Matti Pietikainen, University of Oulu (Finland)

- Deva Ramanan, University of California, Irvine (USA)

- Jason Saragih, Commonwealth Scientific and Industrial Research Organisation (AU)

- Nicu Sebe, University of Trento (Italy)

- Jian Sun, Microsoft Research Asia

- Xiaoou Tang, Chinese University of Hong Kong (China)

- Fernando De La Torre, Carnegie Mellon University (USA)

- Philip A. Tresadern, University of Manchester (UK)

- Michel Valstar, University of Nottingham (UK)

- Xiaogang Wang, Chinese University of Hong Kong (China)

- Fang Wen, Microsoft Research Asia

- Lijun Yin, Binghamton University (USA)

Sponsors:

The Faces "in-the-wild" Challenge & Workshop has been supported by the H2020 Tesla project, the EPSRC projects FACE2VM and ADAManT, and a Google Faculty Award.