Head-pose invariant affect analysis

Facial expression recognition has attracted significant attention because of its usefulness in many applications, such as human-computer interaction, face animation, and the analysis of social interaction. Most existing methods deal with images (or image sequences) in which the depicted persons are relatively still and exhibit posed expressions in a nearly frontal view. However, most real-world applications involve spontaneous human-to-human interactions (e.g., meeting summarization or the analysis of political debates), in which the assumption of immovable subjects is unrealistic. This calls for a joint analysis of head pose and facial expression. This remains a significant research challenge, mainly because of the large variation in the appearance of facial expressions under different head poses and the difficulty of decoupling these two sources of variation.

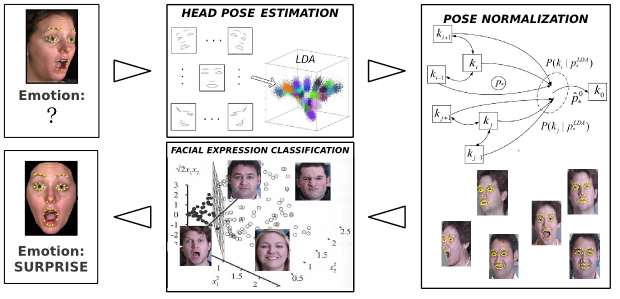

To address the problem of pose-invariant facial expression recognition, we use a three-step approach based on geometric features (i.e., the positions of the facial landmarks obtained by our point detector). In the first step, we perform head pose estimation by means of multi-class LDA trained on data belonging to a discrete set of head poses. In the second step, we perform pose normalization by mapping the 2D positions of facial landmarks in non-frontal poses to the corresponding landmark positions in the frontal pose. As these mappings are highly non-linear, we employ state-of-the-art regression models, such as Gaussian Process Regression, to learn the mapping functions. Once the pose has been normalized, the last step is facial expression classification in the frontal pose, performed with a standard SVM classifier trained on the six basic emotions proposed by Ekman [1].
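The three steps described above can be illustrated with a minimal sketch in Python using scikit-learn. This is not the group's implementation: the landmarks are synthetic toy data, the number of landmarks, the set of yaw angles, the kernel, and the linear SVM are all assumptions made for illustration, and plain Gaussian Process Regression stands in for the coupled and shape-constrained variants developed in the publications listed below.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel
from sklearn.svm import SVC

rng = np.random.default_rng(0)
N_POINTS, N_EXPR = 10, 6      # assumption: 10 landmarks, 6 basic emotions
POSES_DEG = [-30, 0, 30]      # assumption: discrete set of yaw angles

# Toy "face": neutral frontal landmarks, one depth value per landmark,
# and one displacement pattern per expression class.
neutral = rng.uniform(-1, 1, size=(N_POINTS, 2))
depth = rng.uniform(0.5, 1.5, size=N_POINTS)
expr_offsets = 0.1 * rng.standard_normal((N_EXPR, N_POINTS, 2))

def frontal_shape(expr):
    """Noisy frontal landmarks (N_POINTS x 2) for one expression label."""
    return neutral + expr_offsets[expr] + 0.02 * rng.standard_normal((N_POINTS, 2))

def rotate(shape, yaw_deg):
    """Orthographic projection of the landmarks after a yaw rotation."""
    yaw = np.deg2rad(yaw_deg)
    out = shape.copy()
    out[:, 0] = shape[:, 0] * np.cos(yaw) + depth * np.sin(yaw)
    return out

def make_dataset(n_per_cell):
    """Return (posed landmarks, frontal landmarks, pose labels, expression labels)."""
    X, F, pose, expr = [], [], [], []
    for p, yaw in enumerate(POSES_DEG):
        for e in range(N_EXPR):
            for _ in range(n_per_cell):
                f = frontal_shape(e)
                X.append(rotate(f, yaw).ravel())
                F.append(f.ravel())
                pose.append(p)
                expr.append(e)
    return np.array(X), np.array(F), np.array(pose), np.array(expr)

X_tr, F_tr, pose_tr, expr_tr = make_dataset(10)
X_te, F_te, pose_te, expr_te = make_dataset(3)

# Step 1: head pose estimation with multi-class LDA.
pose_clf = LinearDiscriminantAnalysis().fit(X_tr, pose_tr)

# Step 2: one GP regressor per discrete pose, mapping posed landmarks
# to the corresponding frontal landmarks.
kernel = RBF(length_scale=1.0) + WhiteKernel(noise_level=1e-2)
normalizers = {
    p: GaussianProcessRegressor(kernel=kernel, normalize_y=True).fit(
        X_tr[pose_tr == p], F_tr[pose_tr == p]
    )
    for p in range(len(POSES_DEG))
}

# Step 3: SVM expression classifier trained on frontal landmarks only.
expr_clf = SVC(kernel="linear").fit(F_tr, expr_tr)

def recognise(X):
    """Estimate pose, normalize to the frontal view, then classify expression."""
    pose_hat = pose_clf.predict(X)
    frontal_hat = np.empty_like(X)
    for p, gp in normalizers.items():
        mask = pose_hat == p
        if mask.any():
            frontal_hat[mask] = gp.predict(X[mask])
    return pose_hat, frontal_hat, expr_clf.predict(frontal_hat)

pose_hat, frontal_hat, expr_hat = recognise(X_te)
pose_acc = float(np.mean(pose_hat == pose_te))
expr_acc = float(np.mean(expr_hat == expr_te))
print(f"pose accuracy: {pose_acc:.2f}, expression accuracy: {expr_acc:.2f}")
```

Because the expression classifier is trained only on frontal landmarks, all pose handling is confined to the first two steps. In this toy setup the pose-to-frontal mapping is much simpler than for real faces, so the accuracies printed here say nothing about performance on real data.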

Involved group members

Maja Pantic, Stefanos Eleftheriadis, Mihalis A. Nicolaou, Ognjen Rudovic, Robert Walecki

Related Publications

-

Coupled Gaussian Processes for Pose-Invariant Facial Expression Recognition

O. Rudovic, M. Pantic, I. Patras. IEEE Transactions on Pattern Analysis and Machine Intelligence. 35(6): pp. 1357 - 1369, 2013.

Bibtex reference

@article{RudovicEtAlPAMI12,

author = {O. Rudovic and M. Pantic and I. Patras},

pages = {1357--1369},

journal = {IEEE Transactions on Pattern Analysis and Machine Intelligence},

number = {6},

title = {Coupled Gaussian Processes for Pose-Invariant Facial Expression Recognition},

volume = {35},

year = {2013},

}

Endnote reference

%0 Journal Article

%T Coupled Gaussian Processes for Pose-Invariant Facial Expression Recognition

%A Rudovic, O.

%A Pantic, M.

%A Patras, I.

%J IEEE Transactions on Pattern Analysis and Machine Intelligence

%D 2013

%V 35

%N 6

%F RudovicEtAlPAMI12

%P 1357-1369

-

Shape-constrained Gaussian Process Regression for Facial-point-based Head-pose Normalization

O. Rudovic, M. Pantic. Proceedings of IEEE Int’l Conf. on Computer Vision (ICCV 2011). pp. 1495 - 1502, November 2011.

Bibtex reference

@inproceedings{Rudovic2011iccv2,

author = {O. Rudovic and M. Pantic},

pages = {1495--1502},

booktitle = {Proceedings of IEEE Int’l Conf. on Computer Vision (ICCV 2011)},

month = {November},

title = {Shape-constrained Gaussian Process Regression for Facial-point-based Head-pose Normalization},

year = {2011},

}

Endnote reference

%0 Conference Proceedings

%T Shape-constrained Gaussian Process Regression for Facial-point-based Head-pose Normalization

%A Rudovic, O.

%A Pantic, M.

%B Proceedings of IEEE Int’l Conf. on Computer Vision (ICCV 2011)

%D 2011

%8 November

%F Rudovic2011iccv2

%P 1495-1502

-

Coupled Gaussian Process Regression for pose-invariant facial expression recognition

O. Rudovic, I. Patras, M. Pantic. Proceedings of European Conf. Computer Vision (ECCV'10). Heraklion, Crete, Greece, pp. 350 - 363, September 2010.

Bibtex reference

@inproceedings{Rudovic2010cgprf,

author = {O. Rudovic and I. Patras and M. Pantic},

pages = {350--363},

address = {Heraklion, Crete, Greece},

booktitle = {Proceedings of European Conf. Computer Vision (ECCV'10)},

month = {September},

title = {Coupled Gaussian Process Regression for pose-invariant facial expression recognition},

year = {2010},

}

Endnote reference

%0 Conference Proceedings

%T Coupled Gaussian Process Regression for pose-invariant facial expression recognition

%A Rudovic, O.

%A Patras, I.

%A Pantic, M.

%B Proceedings of European Conf. Computer Vision (ECCV'10)

%D 2010

%8 September

%C Heraklion, Crete, Greece

%F Rudovic2010cgprf

%P 350-363

-

Regression-based multi-view facial expression recognition

O. Rudovic, I. Patras, M. Pantic. Proceedings of Int'l Conf. Pattern Recognition (ICPR'10). Istanbul, Turkey, pp. 4121 - 4124, August 2010.

Bibtex reference

@inproceedings{Rudovic2010rmfer,

author = {O. Rudovic and I. Patras and M. Pantic},

pages = {4121--4124},

address = {Istanbul, Turkey},

booktitle = {Proceedings of Int'l Conf. Pattern Recognition (ICPR'10)},

month = {August},

title = {Regression-based multi-view facial expression recognition},

year = {2010},

}

Endnote reference

%0 Conference Proceedings

%T Regression-based multi-view facial expression recognition

%A Rudovic, O.

%A Patras, I.

%A Pantic, M.

%B Proceedings of Int'l Conf. Pattern Recognition (ICPR'10)

%D 2010

%8 August

%C Istanbul, Turkey

%F Rudovic2010rmfer

%P 4121-4124

-

Facial Expression Invariant Head Pose Normalization using Gaussian Process Regression

O. Rudovic, I. Patras, M. Pantic. Proceedings of IEEE Int'l Conf. Computer Vision and Pattern Recognition (CVPR-W'10). San Francisco, USA, 3: pp. 28 - 33, June 2010.

Bibtex reference

@inproceedings{Rudovic2010feihp,

author = {O. Rudovic and I. Patras and M. Pantic},

pages = {28--33},

address = {San Francisco, USA},

booktitle = {Proceedings of IEEE Int'l Conf. Computer Vision and Pattern Recognition (CVPR-W'10)},

month = {June},

title = {Facial Expression Invariant Head Pose Normalization using Gaussian Process Regression},

volume = {3},

year = {2010},

}

Endnote reference

%0 Conference Proceedings

%T Facial Expression Invariant Head Pose Normalization using Gaussian Process Regression

%A Rudovic, O.

%A Patras, I.

%A Pantic, M.

%B Proceedings of IEEE Int'l Conf. Computer Vision and Pattern Recognition (CVPR-W'10)

%D 2010

%8 June

%V 3

%C San Francisco, USA

%F Rudovic2010feihp

%P 28-33